Large language models (LLMs) have rapidly evolved from research curiosities into business‑critical tools. Off‑the‑shelf models can already draft marketing copy, summarise long reports and generate code snippets, but they lack the context and nuances that make an organisation unique.

Fine‑tuning offers a solution: it further trains a pre‑trained model on a focused dataset so that it better understands your domain, brand voice and workflow constraints.

Why Fine‑Tune?

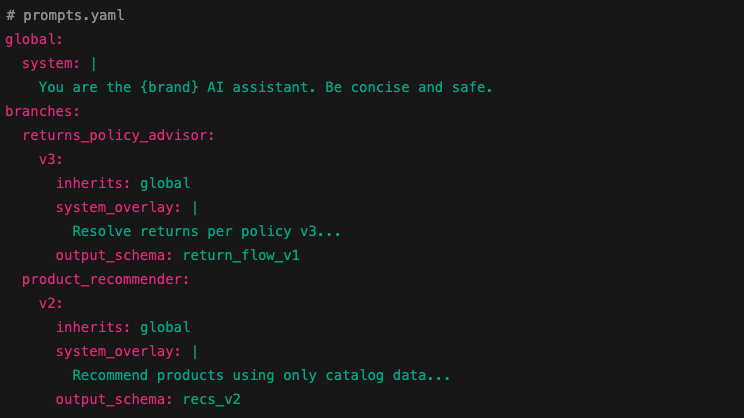

Fine‑tuning customises a base model with examples from your data. Prompt engineering supplies task instructions at run time. Fine‑tuning instead updates the model’s weights (or a small set of added weights), so new patterns are internalised rather than restated in every prompt.

The payoff is typically more reliable output, shorter prompts and closer alignment with your brand voice. For enterprises, fine‑tuning unlocks:

Domain‑specific expertise: Models trained on general web data may not grasp sector jargon, regulatory language or specific product details. Fine‑tuning on proprietary documents can dramatically improve comprehension and relevance.

Consistency and tone: Fine‑tuned models can produce responses that match brand style guidelines or legal requirements, reducing the need for manual editing.

Shorter, cheaper prompts: When a model already “knows” your domain, prompts can be streamlined. This lowers token usage and latency.

Better control: Fine‑tuned models are less prone to drifting off‑task or off‑tone, especially in sensitive work such as policy drafting or customer support.
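The sketch below puts rough numbers on the "shorter, cheaper prompts" point. It compares monthly input‑token spend for two setups: a long, example‑heavy prompt on a base model and a short prompt on a fine‑tuned model. Every figure in it is a hypothetical placeholder, including token counts, request volume and prices per million tokens. The two prices are kept separate because some providers charge more per token for fine‑tuned models. Training cost is left out.

```python
from dataclasses import dataclass


@dataclass
class PromptProfile:
    """Hypothetical per-request prompt profile for one deployment option."""
    name: str
    prompt_tokens: int        # input tokens sent with every request
    price_per_million: float  # assumed input price in USD per 1M tokens


def monthly_input_cost(profile: PromptProfile, requests_per_month: int) -> float:
    """Input-token cost for one month of traffic (output tokens ignored)."""
    total_tokens = profile.prompt_tokens * requests_per_month
    return total_tokens / 1_000_000 * profile.price_per_million


# Illustrative numbers only; substitute your own measurements and price list.
base = PromptProfile("base + long few-shot prompt", prompt_tokens=1_800, price_per_million=0.50)
tuned = PromptProfile("fine-tuned + short prompt", prompt_tokens=300, price_per_million=1.50)

requests = 1_000_000
for profile in (base, tuned):
    print(f"{profile.name}: ${monthly_input_cost(profile, requests):,.2f}")
```

With these placeholder values the fine‑tuned option halves input spend ($450 versus $900 a month), even though its per‑token price is three times higher. A smaller prompt reduction or a steeper price premium could reverse that, so the arithmetic is worth rerunning with your own measurements.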

Fine‑Tuning vs. Other Customisation Approaches

Before jumping into fine‑tuning, enterprises should understand alternative strategies:

Prompt engineering: Crafting detailed instructions for the model to follow. It’s cost‑effective and quick to iterate but can hit limitations as tasks grow complex.

Retrieval‑augmented generation (RAG): Supplying external data via search or database queries at inference time. It keeps proprietary data separate from model weights, but it adds retrieval latency and requires careful assembly of the retrieved results into the prompt.

Full model training: Training a model from scratch on your corpus. This offers maximum control but is prohibitively expensive for most organisations and slow to iterate.

Fine‑tuning sits between prompt engineering and full training. It adapts a model to your domain while leveraging the foundation model’s general capabilities.
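For contrast with fine‑tuning, the sketch below shows where proprietary data lives in a RAG workflow. The data is looked up per request and pasted into the prompt, and the model's weights stay untouched. The word‑overlap retriever is a deliberately naive stand‑in for the embedding search or database query a real system would use. The prompt wording and sample documents are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lower-cased word set; a naive stand-in for embeddings or a search index."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents that share the most words with the query."""
    query_words = tokenize(query)
    # sorted() is stable (also with reverse=True), so ties keep document order.
    ranked = sorted(documents, key=lambda doc: len(query_words & tokenize(doc)), reverse=True)
    return ranked[:k]


def build_rag_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Assemble a prompt at inference time; no model weights are changed."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}\n"
        "Answer:"
    )


# Hypothetical knowledge-base snippets.
DOCS = [
    "Refunds are issued within 14 days of a returned item being received.",
    "Our support line is open Monday to Friday, 9am to 5pm.",
    "Gift cards cannot be exchanged for cash.",
]

print(build_rag_prompt("How many days until refunds are issued?", DOCS, k=1))
```

The retrieved passages travel with every request. That is the source of the extra latency and prompt tokens that the comparison above attributes to RAG.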

Data Requirements

Fine‑tuning quality hinges on the dataset. Enterprises should consider:

Dataset size: As few as 100–500 examples can improve simple tasks. For more complex behaviours or highly specialised language, thousands of examples may be necessary. Start small and scale as needed.

Quality over quantity: Data should be curated to reflect correct and desired outputs. Avoid ambiguous or conflicting examples. Consistency across examples helps the model generalise.

Format: Input‑output pairs are typical (for example, customer question → answer). Each example should contain the prompt and the ideal response.

Diversity: Cover edge cases and varied phrasing so the model doesn’t overfit to narrow patterns.

Privacy and compliance: Ensure sensitive information is redacted or anonymised. Establish data governance controls before using proprietary documents.
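Some of the data checks above can be automated. The sketch below does three things:

- redacts e‑mail addresses;
- flags prompts that appear with conflicting answers;
- writes question/answer pairs as chat‑style JSONL, a layout similar to what several hosted fine‑tuning services accept.

The exact schema, the system message and the single redaction pattern are assumptions. Check your provider's documentation for the required format, and use a vetted PII tool in production rather than one regular expression.

```python
import json
import re
from collections import defaultdict

# Naive e-mail pattern for illustration only; real redaction needs a vetted PII tool.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact(text: str) -> str:
    """Replace anything that looks like an e-mail address with a placeholder."""
    return EMAIL.sub("[EMAIL]", text)


def find_conflicts(pairs: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Return normalised prompts that appear with more than one distinct answer."""
    answers: dict[str, set[str]] = defaultdict(set)
    for prompt, answer in pairs:
        key = " ".join(prompt.lower().split())  # ignore case and extra whitespace
        answers[key].add(answer.strip())
    return {prompt: found for prompt, found in answers.items() if len(found) > 1}


def to_jsonl(pairs: list[tuple[str, str]], system: str) -> str:
    """Serialise redacted pairs as one chat-style JSON object per line."""
    lines = []
    for prompt, answer in pairs:
        record = {
            "messages": [
                {"role": "system", "content": system},
                {"role": "user", "content": redact(prompt)},
                {"role": "assistant", "content": redact(answer)},
            ]
        }
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines)
```

Resolve each entry returned by `find_conflicts` before calling `to_jsonl`. That keeps contradictory answers out of the training set.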

Techniques and Tools

Fine‑tuning approaches differ in how many of the model’s weights are updated:

Full Fine‑Tuning

All model weights are updated. This can yield the largest gains, but it requires significant compute and carries a higher risk of overfitting. It is best reserved for cases where the dataset is large and well‑curated.

LoRA/PEFT Methods

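Parameter‑efficient fine‑tuning (PEFT) freezes most of the original weights and trains only a small number of parameters, often in added adapter modules. Low‑rank adaptation (LoRA), the best‑known PEFT method, learns a pair of small low‑rank matrices whose product is added to selected weight matrices. This cuts compute, memory and training time, making it attractive for enterprises running on limited infrastructure.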
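The LoRA saving is easiest to see in numbers. A frozen weight matrix W of shape d × k is paired with trainable matrices B (d × r) and A (r × k), and the layer uses W + (α/r)·BA. Only r(d + k) parameters are trained instead of d·k. The pure‑Python sketch below shows the parameter count and a forward pass on toy matrices. The 4096 × 4096 layer, rank 8 and α value are illustrative assumptions rather than recommendations. Libraries such as Hugging Face's PEFT implement the same idea on framework tensors rather than nested lists.

```python
Matrix = list[list[float]]


def lora_trainable_params(d: int, k: int, r: int) -> tuple[int, int]:
    """Trainable parameters for one d x k layer: (full fine-tuning, LoRA rank r)."""
    return d * k, r * (d + k)


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Plain nested-list matrix product."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def lora_forward(x: Matrix, W: Matrix, A: Matrix, B: Matrix, alpha: float) -> Matrix:
    """Compute x(W + (alpha / r) BA) without ever modifying the frozen W.

    x is n x d, W is d x k, B is d x r and A is r x k. The low-rank path is
    evaluated as (xB)A, so the full d x k update BA is never materialised.
    """
    r = len(A)
    scale = alpha / r
    base = matmul(x, W)
    update = matmul(matmul(x, B), A)
    return [
        [b + scale * u for b, u in zip(base_row, upd_row)]
        for base_row, upd_row in zip(base, update)
    ]


full, lora = lora_trainable_params(4096, 4096, r=8)
print(full, lora, f"{lora / full:.2%}")  # 16777216 65536 0.39%
```

In the original LoRA paper, B is initialised to zeros. The adapted layer therefore starts out behaving exactly like the frozen one, and training moves it away gradually.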

Instruction Fine‑Tuning

Training on examples that show the model how to follow instructions precisely. This improves instruction following and can reduce hallucinations.
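Instruction fine‑tuning data is usually rendered through a fixed prompt template. The sketch below splits each example into a prompt and a target. Training code commonly computes the loss only on the target, so the model learns to produce the response rather than reproduce the instruction. The "### Instruction / ### Response" template and the field names are hypothetical; use whatever format your base model or provider expects.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical templates; match the format your base model was trained with.
TEMPLATE_WITH_CONTEXT = "### Instruction:\n{instruction}\n\n### Input:\n{context}\n\n### Response:\n"
TEMPLATE_NO_CONTEXT = "### Instruction:\n{instruction}\n\n### Response:\n"


@dataclass
class InstructionExample:
    instruction: str
    response: str
    context: Optional[str] = None  # optional supporting text for the instruction


def render(example: InstructionExample) -> tuple[str, str]:
    """Split an example into (prompt, target) using the hypothetical template."""
    if example.context:
        prompt = TEMPLATE_WITH_CONTEXT.format(
            instruction=example.instruction, context=example.context
        )
    else:
        prompt = TEMPLATE_NO_CONTEXT.format(instruction=example.instruction)
    return prompt, example.response.strip()
```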

Vendors such as Cohere, OpenAI and Anthropic offer managed fine‑tuning services (in some cases through cloud partners), making the technique accessible to companies without dedicated machine‑learning teams.

Benefits and Trade‑Offs

Fine‑tuning delivers measurable gains but also introduces new complexities.

Benefits

- Performance improvements: Fine‑tuned models can work with shorter prompts and achieve higher accuracy on domain‑specific tasks. In practice, enterprises have reported better summarisation quality, improved tone matching and more coherent dialogue.

- Efficiency: Models need fewer prompt tokens and less post‑processing, which can lower inference costs, although some providers charge more per token for fine‑tuned models.

- Reduced hallucinations: When the model has seen explicit examples of correct behaviour, it is less likely to fabricate answers. Including tricky scenarios whose correct output is an admission of uncertainty helps the model learn when to say “I don’t know.”
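One way to act on the point about saying "I don't know" is to mix in a limited number of out‑of‑scope questions whose target answer is an explicit abstention. In the sketch below, the fallback wording, the 10% cap and the sample questions are all hypothetical. The cap is there so the model does not learn to refuse questions it should answer.

```python
import random

# Hypothetical fallback wording; align it with your own support policy.
FALLBACK = "I don't know based on the information I have. Please contact support."


def add_abstention_examples(
    pairs: list[tuple[str, str]],
    out_of_scope: list[str],
    ratio: float = 0.1,
    seed: int = 0,
) -> list[tuple[str, str]]:
    """Return pairs plus a capped number of (question, FALLBACK) examples, shuffled."""
    rng = random.Random(seed)
    # Roughly `ratio` of the original size (at least one), limited by availability.
    n = min(len(out_of_scope), max(1, int(len(pairs) * ratio)))
    chosen = rng.sample(out_of_scope, n)
    augmented = list(pairs) + [(question, FALLBACK) for question in chosen]
    rng.shuffle(augmented)
    return augmented
```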

Trade‑Offs

- Compute cost and time: Training runs can be expensive, especially for full fine‑tuning. Parameter‑efficient methods mitigate this, but budgets must still account for training hours.

- Overfitting: Fine‑tuned models can become brittle if the dataset is too small or biased. They may perform poorly on tasks outside the fine‑tuned scope.

- Maintenance burden: Domain knowledge evolves. Fine‑tuned models must be retrained or augmented to stay current, introducing operational overhead.

- Safety and compliance: Adjusting weights could inadvertently amplify biases or lead the model to produce disallowed content if the training examples contain problematic language.

Implementation Advice

Define objectives: Clarify why you need fine‑tuning. Identify tasks where prompt engineering or RAG falls short and articulate performance metrics (accuracy, token usage, latency).

Start small: Begin with a pilot project. Fine‑tune a model on a small dataset using parameter‑efficient methods, then measure gains and gather user feedback.

Iterate and evaluate: Test the fine‑tuned model on held‑out data and real use cases. Compare against baseline prompts and RAG workflows.

Monitor and refine: Establish monitoring to detect drift or deterioration. Plan for periodic retraining as data or requirements change.

Invest in data pipelines: Build processes for data collection, annotation and review. High‑quality training data is the foundation of successful fine‑tuning.

Prioritise safety: Include negative examples to teach the model what not to do. Engage legal and compliance teams early.
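The evaluation and monitoring steps can start with a small harness like the one below. It holds out a fixed slice of the data, runs a baseline and a fine‑tuned model over the same held‑out examples, and reports three figures: exact‑match accuracy, a rough prompt size and mean latency. Keep its limits in mind:

- the model callables are hypothetical stand‑ins for calls to your deployed models;
- exact match only suits short, well‑defined answers;
- word count is a crude proxy for tokens.

```python
import random
import time
from typing import Callable

Example = tuple[str, str]  # (prompt, expected answer)


def train_holdout_split(
    data: list[Example], holdout: float = 0.2, seed: int = 42
) -> tuple[list[Example], list[Example]]:
    """Shuffle once with a fixed seed and reserve a held-out slice for evaluation."""
    items = list(data)
    random.Random(seed).shuffle(items)
    n_holdout = round(len(items) * holdout)
    cut = len(items) - n_holdout
    return items[:cut], items[cut:]


def evaluate(
    model: Callable[[str], str], examples: list[Example], prompt_prefix: str = ""
) -> dict[str, float]:
    """Exact-match accuracy, average prompt size in words and mean latency."""
    if not examples:
        raise ValueError("need at least one held-out example")
    hits, sizes, latencies = 0, [], []
    for prompt, expected in examples:
        full_prompt = prompt_prefix + prompt
        start = time.perf_counter()
        output = model(full_prompt)
        latencies.append(time.perf_counter() - start)
        hits += output.strip().lower() == expected.strip().lower()
        sizes.append(len(full_prompt.split()))  # crude proxy for token count
    return {
        "accuracy": hits / len(examples),
        "avg_prompt_words": sum(sizes) / len(sizes),
        "avg_latency_s": sum(latencies) / len(latencies),
    }
```

The same `evaluate` call can be rerun periodically on a fresh sample of labelled production traffic as a simple drift signal. Falling accuracy is a cue to investigate or retrain.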

Summary – Fine‑Tuning LLMs

Fine‑tuning large language models is becoming a mainstream technique for enterprises seeking to maximise the value of AI.

By teaching a model your organisation’s language, examples and policies, AI developers can deliver more relevant, on‑brand and efficient AI experiences.

As of September 2024, the tools and best practices around fine‑tuning have matured. By starting with clear objectives, investing in high‑quality data and embracing parameter‑efficient training methods, enterprises can customise LLMs to meet unique business needs while managing risks.